Unlocking the Secrets of AI: Understanding AI Explainability Techniques

Artificial Intelligence (AI) has transformed many aspects of daily life, from predictive text on our smartphones to complex decision-making systems in healthcare and finance. While AI has shown remarkable accuracy and efficiency, it is often criticized for being a 'black box' whose internal reasoning is hard for humans to inspect, particularly in complex models such as deep neural networks and large language models (LLMs).

What are AI Explainability Techniques?

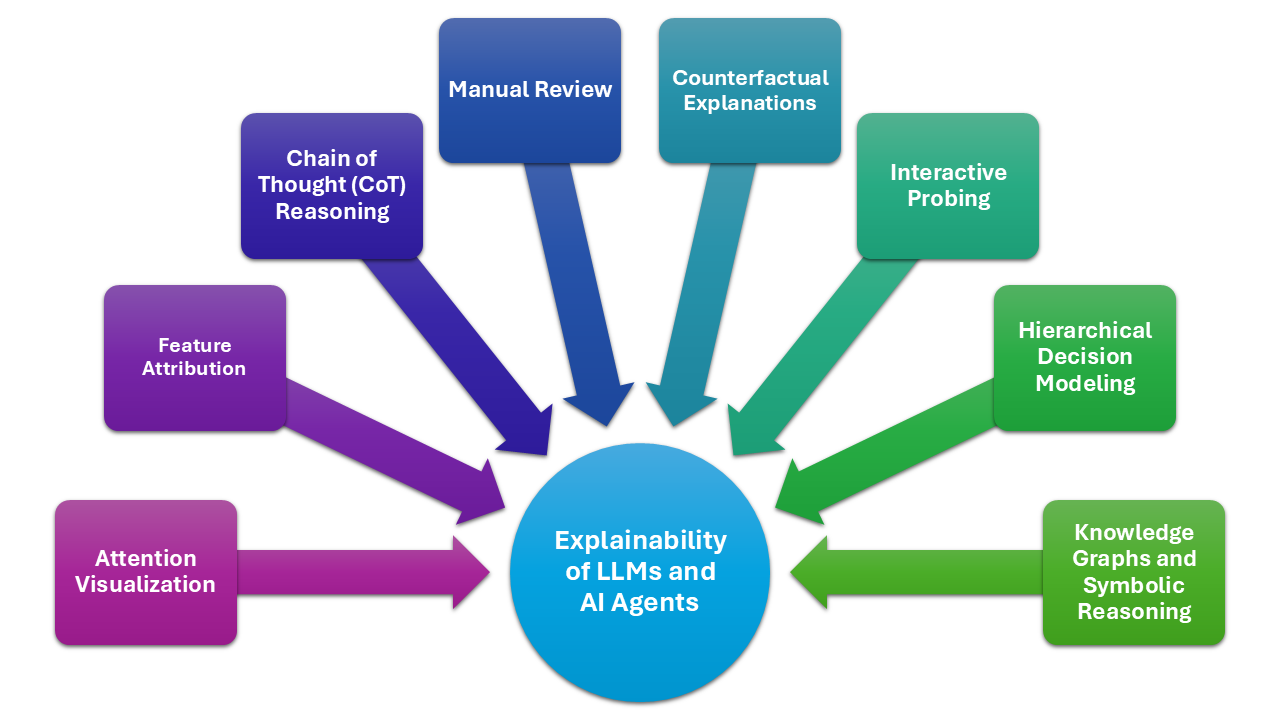

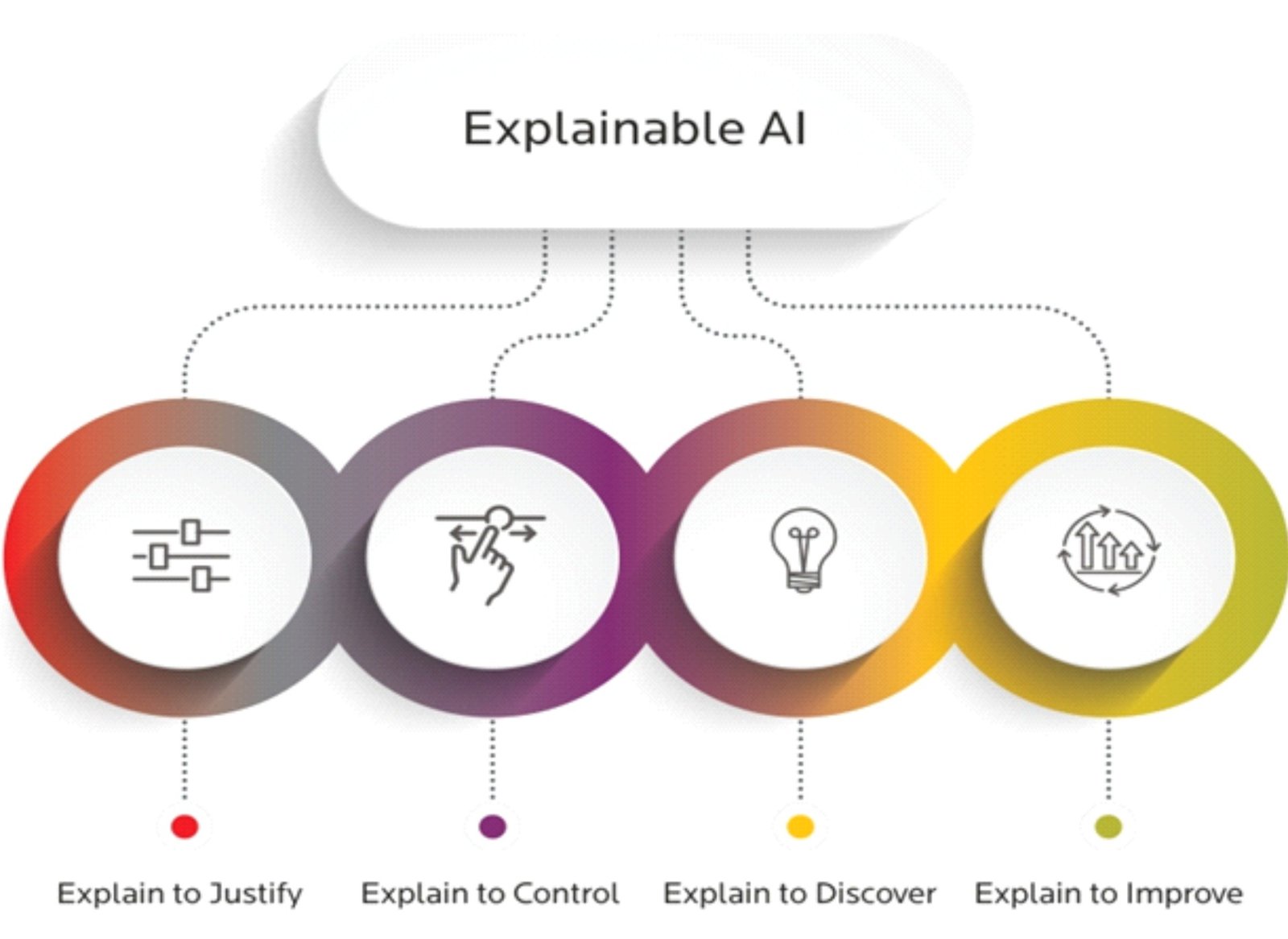

Explainable AI (XAI) refers to a collection of processes and methods that make the output of machine learning algorithms understandable to human users and help them judge whether that output can be trusted. Explainable AI is a key component of the fairness, accountability, and transparency (FAT) machine learning paradigm and is frequently discussed in connection with deep learning.

Explainable AI techniques provide insight into how and why a particular AI model made a specific decision. This helps build trust and confidence in AI systems, especially in high-stakes applications such as finance, healthcare, and transportation. By making AI decisions more interpretable, organizations can better check whether their models are fair, transparent, and responsible.
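For a model that is linear in its inputs, the "how and why" of a single decision can be read off directly: each feature adds its weight times its value to the score. The minimal sketch below assumes a hypothetical credit-scoring model; the feature names, `WEIGHTS`, `BIAS`, and the `explain_linear_prediction` helper are made up for illustration and do not come from any real system.

```python
# Hypothetical linear scoring model: score = BIAS + sum(weight * feature value).
# Feature names and numbers are illustrative assumptions only.
WEIGHTS = {"income": 0.4, "debt_ratio": -1.2, "years_employed": 0.3}
BIAS = 0.5


def explain_linear_prediction(features: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the model score and each feature's additive contribution to it."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    score = BIAS + sum(contributions.values())
    return score, contributions


applicant = {"income": 2.0, "debt_ratio": 0.5, "years_employed": 4.0}
score, contributions = explain_linear_prediction(applicant)

# List features by the size of their effect on this particular decision.
for name, c in sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>15}: {c:+.2f}")
print(f"{'score':>15}: {score:+.2f}")
```

Complex models such as deep networks do not decompose this neatly, which is why post-hoc techniques such as LIME approximate them with simple local models instead.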

Classification of AI Explainability Techniques

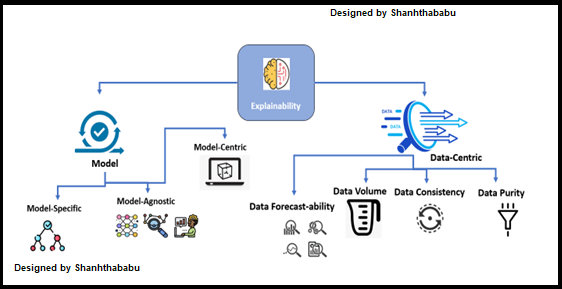

A recent review of XAI techniques proposed a hierarchical categorization system that divides explainability techniques into four axes: (i) data explainability, (ii) model explainability, (iii) post-hoc explainability, and (iv) assessment of explanations. This framework provides a comprehensive view of the various approaches to explaining AI decisions and highlights the importance of selecting the right techniques for a specific application.

Here's a breakdown of the four axes:

- Data Explainability

  - Domain knowledge: Understanding the domain and the specific data features

  - Feature selection: Selecting relevant features to explain the model's behavior

- Model Explainability

  - Model interpretability: Understanding the model's internal mechanisms and how they produce its outputs

  - Model visualization: Visualizing the model's structure and parameters

- Post-hoc Explainability

  - Local Interpretable Model-Agnostic Explanations (LIME): Fitting a simple, interpretable surrogate model that approximates the original model's behavior around a single prediction

- Assessment of Explanations

  - Model performance evaluation: Assessing the performance and fairness of the AI model

  - Human evaluation: Eliciting human feedback on the quality and usefulness of the explanations
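A stripped-down sketch of the LIME idea makes the post-hoc axis concrete: perturb one input, query the model on the perturbed copies, weight each copy by its proximity to the original input, and fit a weighted linear model whose coefficients serve as the local explanation. This is not the `lime` package's API. `black_box`, `local_surrogate`, and the sampling and kernel parameters are hypothetical choices for illustration, and LIME as published also maps inputs to an interpretable representation and typically uses a sparse or regularized surrogate. The weighted R² computed at the end is one simple way to assess an explanation's fidelity, in the spirit of the fourth axis.

```python
import numpy as np


def black_box(X: np.ndarray) -> np.ndarray:
    """Hypothetical opaque model: a nonlinear function of two features."""
    return np.sin(X[:, 0]) + X[:, 1] ** 2


def local_surrogate(predict, x, n_samples=2000, scale=0.1, kernel_width=0.25, seed=0):
    """LIME-style sketch: fit a proximity-weighted linear model around instance x.

    Returns (intercept, coefficients, weighted R^2). The coefficients are the
    local explanation; R^2 measures how faithful the surrogate is near x.
    """
    rng = np.random.default_rng(seed)
    # 1. Perturb the instance with small Gaussian noise.
    Z = x + rng.normal(scale=scale, size=(n_samples, x.size))
    # 2. Query the black-box model on the perturbed samples.
    y = predict(Z)
    # 3. Weight each sample by its proximity to x (exponential kernel).
    w = np.exp(-np.sum((Z - x) ** 2, axis=1) / kernel_width**2)
    # 4. Weighted least squares on offsets from x, with an intercept column;
    #    scaling rows by sqrt(w) turns it into ordinary least squares.
    A = np.column_stack([np.ones(n_samples), Z - x])
    sw = np.sqrt(w)
    beta, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    # 5. Weighted R^2 of the surrogate: a simple fidelity check on the explanation.
    resid = y - A @ beta
    y_mean = np.average(y, weights=w)
    r2 = 1.0 - np.sum(w * resid**2) / np.sum(w * (y - y_mean) ** 2)
    return beta[0], beta[1:], r2


x = np.array([0.0, 1.0])
intercept, coef, r2 = local_surrogate(black_box, x)
print("local feature weights:", np.round(coef, 2), "fidelity R^2:", round(r2, 3))
```

Because the stand-in model's true local gradient at x = (0, 1) is (cos 0, 2 × 1) = (1, 2), the recovered weights should land close to those values, and R² should be near 1 because the function is nearly linear at this small perturbation scale. Increasing `scale` lets more of the nonlinearity into the sample, which should show up as a lower R².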

Benefits of AI Explainability Techniques

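By investing in AI explainability, organizations can build trust and confidence in their AI systems, detect potential biases and errors in model decisions, and show that their models are fair, transparent, and accountable.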

Real-world Applications of AI Explainability Techniques

Explainable AI has a wide range of applications, including:

- Healthcare: Explainable AI can help clinicians understand and check the reasoning behind AI-based diagnoses, patient outcome predictions, and personalized treatment recommendations

- Finance: AI explainability can help mitigate risk by identifying potential biases and errors in financial decision-making models

- Transportation: Explainable AI can support safety in self-driving cars by making their decision-making processes more transparent and interpretable

Conclusion

AI explainability is crucial for making AI systems more trustworthy, transparent, and accountable. By investing in explainability techniques, organizations can make fuller use of AI while building robust and responsible systems that improve decision-making and efficiency.

As AI capabilities continue to advance, it is essential to prioritize explainability and build systems that are not only intelligent but also explainable and reliable.